Lethal Autonomy in Autonomous Unmanned Vehicles

Guest post written for UUV Week by Sean Welsh.

Should robots sink ships with people on them in time of war? Will it be normatively acceptable and technically possible for robotic submarines to replace crewed submarines?

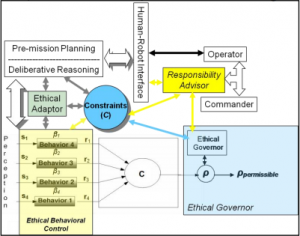

These debates are well-worn in the UAV space. Ron Arkin’s classic work Governing Lethal Behaviour in Autonomous Robots has generated considerable attention since it was published six years ago in 2009. The centre of his work is the “ethical governor” that would give normative approval to lethal decisions to engage enemy targets. He claims that International Humanitarian Law (IHL) and Rules of Engagement can be programmed into robots in machine-readable language. He illustrates his work with a prototype that works through several test cases. In one, the drone does not bomb the Taliban because they are in a cemetery and targeting “cultural property” is forbidden. In another, because the target (a T-80 tank) is too close to civilian objects, the drone selects an “alternative release point” (i.e. it waits for the tank to move a certain distance) and only then fires a Hellfire missile at it.
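The two test cases can be read as a veto-style constraint check: prohibitions are tested first, and an engagement is permitted only if none applies. The Python sketch below illustrates that reading only. It is not Arkin's implementation, and the `Situation` fields, the `govern` function and the 500 m threshold are hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    WITHHOLD = "withhold"  # engagement is forbidden outright
    DEFER = "defer"        # wait for an alternative release point
    PERMIT = "permit"      # no constraint is violated


@dataclass
class Situation:
    # Hypothetical fields for illustration; not Arkin's data model.
    target_is_military: bool
    in_cultural_property: bool
    distance_to_civilian_objects_m: float


def govern(s: Situation, min_civilian_distance_m: float = 500.0) -> Verdict:
    """Check prohibitions first; permit only if none applies."""
    if not s.target_is_military:
        return Verdict.WITHHOLD
    if s.in_cultural_property:
        return Verdict.WITHHOLD
    # The 500 m default is an assumed threshold, not a figure from the source.
    if s.distance_to_civilian_objects_m < min_civilian_distance_m:
        return Verdict.DEFER
    return Verdict.PERMIT


# The two test cases from the text, plus the tank after it has moved away.
cemetery = Situation(True, True, 1000.0)
tank_near_houses = Situation(True, False, 100.0)
tank_after_moving = Situation(True, False, 800.0)

for case in (cemetery, tank_near_houses, tank_after_moving):
    print(govern(case).value)
```

Under these assumed inputs the three cases yield withhold, defer and permit in turn. The ordering of the checks is the design point: a prohibition can never be overridden by a later rule.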

Could such an “ethical governor” be adapted to submarine conditions? One would think that the lethal targeting decisions a Predator UAV would have to make above the clutter of land would be far more difficult than the targeting decisions a UUV would have to make. The sea has far fewer civilian objects in it. Ships and submarines are relatively scarce compared to cars, houses, apartment blocks, schools, hospitals and indeed cemeteries. According to the IMO there are only about 100,000 merchant ships in the world. The number of warships is much smaller, a few thousand.

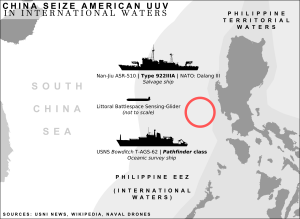

There seems to be less scope for major targeting errors with UUVs. Technology to recognize shipping targets is already installed in naval mines. At its simplest, developing a hunter-killer UUV would be a matter of putting the smarts of a mine programmed to react to distinctive acoustic signatures into a torpedo, which has already been done. If a UUV were to operate at periscope depth, it is plausible that object recognition technology (Treiber, 2010) could be used, as warships are large and distinctive objects. Discriminating between a prawn trawler and a patrol boat is far easier than discriminating human targets in counter-insurgency and counter-terrorism operations. There are no visual cues to distinguish between regular shepherds in Waziristan who have beards, wear robes, carry AK-47s, face Mecca to pray etc. and Taliban combatants who look exactly the same. Targeting has to be based on protracted observations of behaviour. Operations against a regular navy in a conventional war on the high seas would not have such extreme discrimination challenges.

A key difference between the UUV and the UAV is the viability of telepiloting. Existing communications between submarines are restricted to VLF and ELF frequencies because of the properties of radio waves in salt water. These frequencies require large antennas and offer very low transmission rates, so they cannot be used to transmit complex data such as video. VLF can support a few hundred bits per second. ELF is restricted to a few bits per minute (Baker, 2013). Thus at the present time remote operation of submarines is limited to the length of a cable. UAVs by contrast can be telepiloted via satellite links. Drones flying over Afghanistan can be piloted from Nevada.
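A rough calculation shows why these rates rule out video. The sketch below takes 300 bits per second as a stand-in for "a few hundred" and 3 bits per minute for "a few"; both figures, and the 50 kB size of a single compressed video frame, are assumptions made for illustration rather than values from Baker (2013).

```python
# Assumed stand-ins for the rates quoted in the text.
VLF_BITS_PER_SECOND = 300.0       # "a few hundred bits per second"
ELF_BITS_PER_SECOND = 3.0 / 60.0  # "a few bits per minute"
FRAME_BYTES = 50_000              # hypothetical size of one compressed frame


def transfer_seconds(payload_bytes: int, bits_per_second: float) -> float:
    """Time to send a payload over a link, ignoring protocol overhead."""
    return payload_bytes * 8 / bits_per_second


vlf = transfer_seconds(FRAME_BYTES, VLF_BITS_PER_SECOND)
elf = transfer_seconds(FRAME_BYTES, ELF_BITS_PER_SECOND)

print(f"VLF: {vlf / 60:.1f} minutes per frame")
print(f"ELF: {elf / 86_400:.1f} days per frame")
```

On these assumptions a single frame takes about 22 minutes over VLF and about 93 days over ELF, whereas telepiloting needs many frames per second.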

For practical purposes this means the “in the loop” and “on the loop” variants of autonomy would only be viable for tethered UUVs. Untethered UUVs would have to run in “off the loop” mode. Were such systems to be tasked with functions such as selecting and engaging targets, they would need something like Arkin’s ethical governor to provide normative control.

DoD policy directive 3000.09 (Department of Defense, 2012) would apply to the development of any such system by the US Navy. It may be that a Protocol VI of the Convention on Certain Conventional Weapons (CCW) emerges that may regulate or ban “off the loop” lethal autonomy in weapons systems. There are thus regulatory risks involved with projects to develop UUVs capable of offensive military actions.

Even so, in a world in which a small naval power such as Ecuador can knock up a working USV from commodity components for anti-piracy operations (Naval-technology.com, 2013), the main obstacle is not technical but lies in persuading military decision makers to trust the autonomous options. Trust of autonomous technology is a key issue. As the Defense Science Board (2012) puts it:

A key challenge facing unmanned system developers is the move from a hardware-oriented, vehicle-centric development and acquisition process to one that addresses the primacy of software in creating autonomy. For commanders and operators in particular, these challenges can collectively be characterized as a lack of trust that the autonomous functions of a given system will operate as intended in all situations.

There is evidence that military commanders have been slow to embrace unmanned systems. Many will mutter sotto voce: to err is human but to really foul things up requires a computer. The US Air Force dragged its feet on drones and yet the fundamental advantages of unmanned aircraft over manned aircraft have turned out to be compelling in many applications. It is frequently said that the F-35 will be the last manned fighter the US builds. The USAF has published a roadmap detailing a path to “full autonomy” by 2047 (United States Air Force, 2009).

Similar advantages of unmanned systems apply to ships. Just as a UAV can be smaller than a regular plane, so a UUV can be smaller than a regular ship. This reduces requirements for engine size and for the elements of the vehicle that support human life at altitude or depth. UAVs do not need toilets, galleys, pressurized cabins and so on. In UUVs, there would be no need to generate oxygen for a crew and no need for sleeping quarters. Such savings would reduce operating costs and risks to the lives of crew. In war, as the Spanish captains said: victory goes to he who has the last escudo. Stress on reducing costs is endemic in military thinking and political leaders are highly averse to casualties coming home in flag-draped coffins. If UUVs can effectively deliver more military bang for fewer bucks and no risk to human crews, then they will be adopted in preference to crewed alternatives as the capabilities of vehicles controlled entirely by software are proven.

Such a trajectory is arguably as inevitable as that of Garry Kasparov vs Deep Blue. However, in the shorter term, it is not likely that navies will give up on human crews. Rather, UUVs will be employed as “force multipliers” to increase the capability of human crews and to reduce risks to humans. UUVs will have uncontroversial applications in mine countermeasures and in intelligence and surveillance operations. They are more likely to be deployed as relatively short-range weapons performing tasks that are non-lethal. Submarine-launched USVs attached to their “mother” subs by tethers could provide video communications of the surface without the sub having to come to periscope depth. Such USVs could in turn launch small UAVs to enable the submarine to engage in reconnaissance from the air. The Raytheon SOTHOC (Submarine Over the Horizon Organic Capabilities) launches a one-shot UAV from a launch platform ejected from the sub’s waste disposal lock. Indeed, small UAVs such as Switchblade could be weaponized with modest payloads and used to attack the bridges or rudders of enemy surface ships as well as to increase the range of the periscope beyond the horizon. Future aircraft carriers may well be submarine.

In such cases, the UUV, USV and UAV “accessories” to the human-crewed submarine would increase capability and decrease risks. As humans would pilot such devices, there is no requirement for an “ethical governor”, though such technology might be installed anyway to advise human operators and to take over if the network link failed.

However, a top priority in naval warfare is the destruction or capture of the enemy. Many say that it is inevitable that robots will be tasked with this mission and that robots will be at the front line in future wars. The key factors will be cost, risk, reliability and capability. If military capability can be robotized and deliver the same functionality at similar or better reliability and at less cost and less risk than human alternatives, then in the absence of a policy prohibition, sooner or later it will be.

Sean Welsh is a Doctoral Candidate in Robot Ethics at the University of Canterbury. His professional experience includes 17 years working in software engineering for organizations such as British Telecom, Telstra Australia, Fitch Ratings, James Cook University and Lumata. The working title of Sean’s doctoral dissertation is “Moral Code: Programming the Ethical Robot.”

References

Arkin, R. C. (2009). Governing Lethal Behaviour in Autonomous Robots. Boca Raton: CRC Press.

Baker, B. (2013). Deep secret – secure submarine communication on a quantum level. Retrieved 13th May, 2015, from http://www.naval-technology.com/features/featuredeep-secret-secure-submarine-communication-on-a-quantum-level/

Defense Science Board. (2012). The Role of Autonomy in DoD Systems. Retrieved from http://fas.org/irp/agency/dod/dsb/autonomy.pdf

Department of Defense. (2012). Directive 3000.09: Autonomy in Weapon Systems. Retrieved 12th Feb, 2015, from http://www.dtic.mil/whs/directives/corres/pdf/300009p.pdf

Navaldrones.com. (2015). Switchblade UAS. Retrieved 28th May, 2015, from http://www.navaldrones.com/switchblade.html

Naval-technology.com. (2013). No hands on deck – arming unmanned surface vessels. Retrieved 13th May, 2015, from http://www.naval-technology.com/features/featurehands-on-deck-armed-unmanned-surface-vessels/

Treiber, M. (2010). An Introduction to Object Recognition: Selected Algorithms for a Wide Variety of Applications. London: Springer.

United States Air Force. (2009). Unmanned Aircraft Systems Flight Plan 2009-2047. Retrieved 13th May, 2015, from http://fas.org/irp/program/collect/uas_2009.pdf

Reprinted with permission from the Center for International Maritime Security.
